AI in Healthcare without breaking HIPAA (MLJAR Studio guide)

Artificial intelligence is transforming healthcare. Today, it is possible to analyze patient data faster, build predictive models automatically, and generate reports in minutes instead of hours. This creates a huge opportunity for teams working with medical data. At the same time, there is one critical challenge that cannot be ignored. That challenge is HIPAA compliance. Many organizations either avoid AI completely or use it in a risky way. The truth is much simpler and more practical.

👉 You can use AI in healthcare safely.

👉 You just need the right architecture.

What is HIPAA and why does it matter in AI

HIPAA, or the Health Insurance Portability and Accountability Act, is a US law that protects sensitive patient data. It defines how protected health information, known as PHI, can be stored, processed, and shared. PHI is health information that can be linked to an identifiable patient. The identifying part can be obvious, such as a name or phone number, but it can also be a combination of attributes that together make a person identifiable. In the context of AI in healthcare, HIPAA becomes especially important. Modern AI systems process large amounts of data, and without proper controls, this data can easily be exposed.

Definition: HIPAA compliance in AI

HIPAA compliance in AI means designing systems where protected health information (PHI) is never exposed outside controlled environments, and all data processing follows strict rules for privacy, access, and security.

This definition is important because it highlights a key idea. HIPAA compliance is not about a specific tool. It is about how your system works.

The biggest misconception about HIPAA compliance

Many people ask whether a tool is HIPAA compliant. This question appears often in discussions about AI in healthcare. However, this is not how compliance works. No tool, including MLJAR Studio or any AI platform, is automatically HIPAA compliant. Instead, compliance depends on how you use the tool and how your system is designed. This means that the same tool can be used in a safe or unsafe way depending on your workflow.

Why most AI workflows break HIPAA compliance in healthcare

Most problems come from data leaving the controlled environment. A typical mistake looks simple. Someone exports patient data and uses an external AI tool to analyze it. This might include copying data into a chatbot or sending it to an API. Even if this is done for testing, it can expose protected health information. This is exactly what HIPAA is designed to prevent. To avoid this, you need a clear and structured approach to how data flows through your system.

A simple architecture for HIPAA compliance in AI

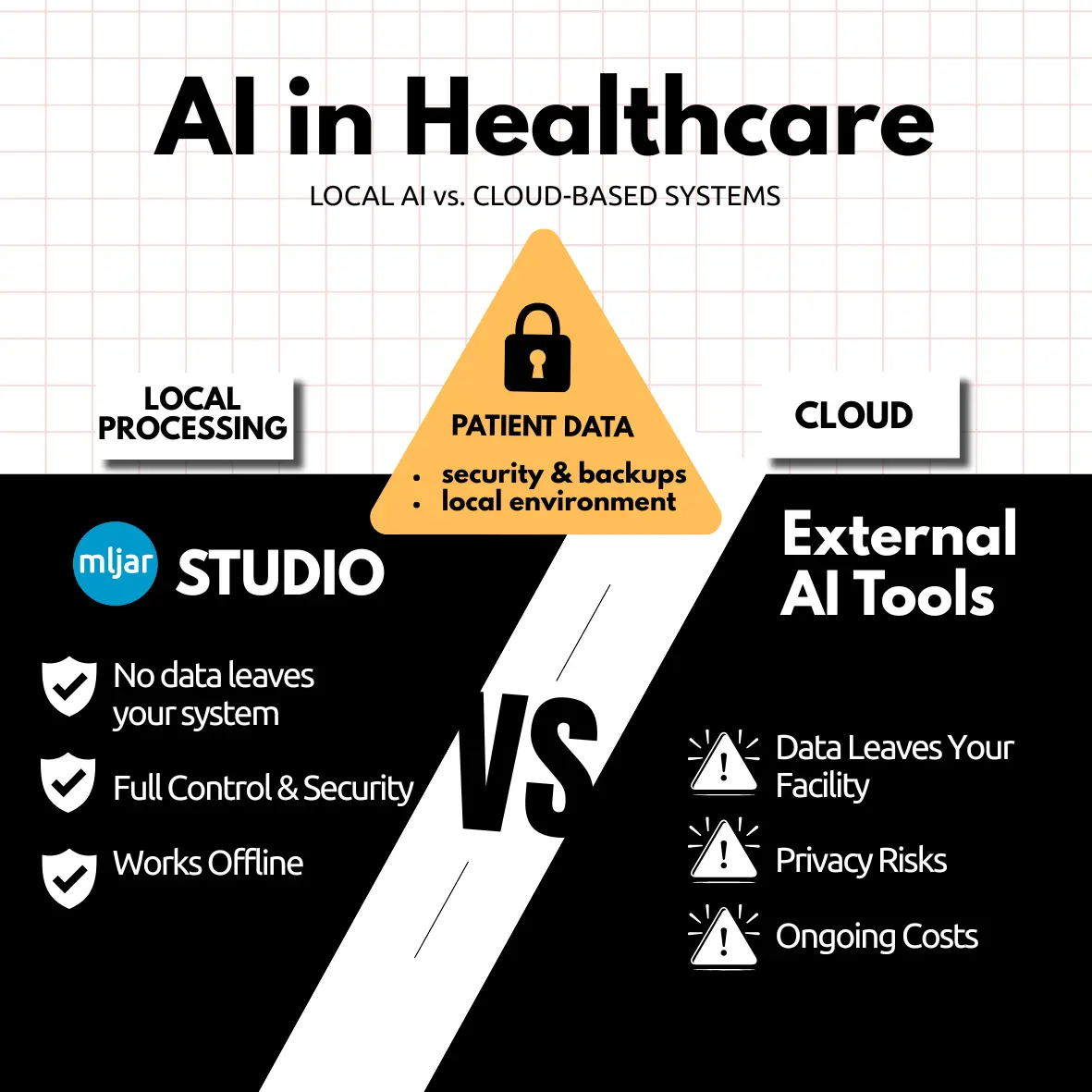

A safe system can be understood as a set of clearly separated layers. At the beginning, there is a layer where sensitive data exists. This is where raw patient information is stored. This layer must be strictly controlled, encrypted, and accessible only to authorized users.

The next layer is where data processing happens. This is where MLJAR Studio fits naturally. Because MLJAR Studio can run locally, it allows you to analyze data, build models, and generate reports without sending data outside your infrastructure.

There can also be a third layer where external AI tools are used. These tools are powerful, but they must only work with anonymized data. Raw patient data should never leave your controlled environment. This separation is the foundation of safe AI in healthcare.
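The boundary between the processing layer and the external layer can be enforced in code rather than by convention alone. Below is a minimal sketch of such a gate. The blocklist `DIRECT_IDENTIFIER_COLUMNS`, the exception `PHILeakError`, and the helper `release_to_external_layer` are hypothetical names introduced for illustration, and a check on column names is only a first line of defense, since it says nothing about what the values contain.

```python
import pandas as pd

# Hypothetical blocklist of column names treated as direct identifiers.
DIRECT_IDENTIFIER_COLUMNS = {"name", "address", "phone", "email", "ssn", "dob"}


class PHILeakError(Exception):
    """Raised when a dataset that still looks like PHI is about to leave."""


def release_to_external_layer(df: pd.DataFrame) -> pd.DataFrame:
    """Return a copy of df only if no blocklisted column is present."""
    found = sorted(
        c for c in df.columns if str(c).lower() in DIRECT_IDENTIFIER_COLUMNS
    )
    if found:
        raise PHILeakError(f"refusing to release columns: {found}")
    return df.copy()


# Made-up example record; no real patient data.
raw = pd.DataFrame({"name": ["Ann"], "dob": ["1980-02-03"], "glucose": [5.4]})
safe = raw.drop(columns=["name", "dob"])

released = release_to_external_layer(safe)  # passes the gate
try:
    release_to_external_layer(raw)  # blocked at the boundary
    blocked = False
except PHILeakError:
    blocked = True
```

Routing every export through one function like this gives the architecture a single place to review and test, instead of relying on each analyst to remember the rule.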

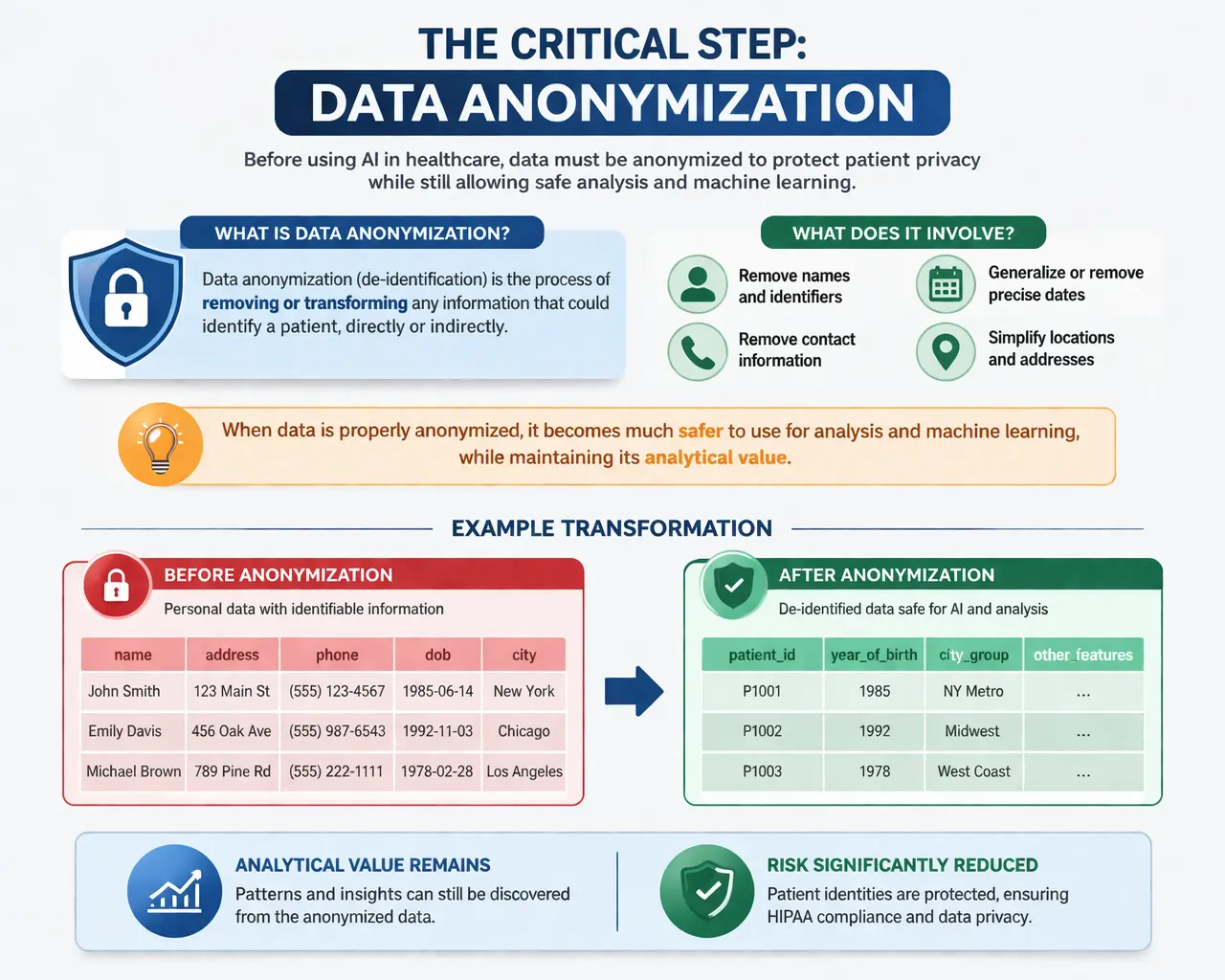

The critical step: data anonymization

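Before data is used with AI in healthcare, it must be anonymized. This process, also called de-identification, involves removing or transforming any information that could identify a patient. It includes removing names, addresses, phone numbers, identifiers, and precise dates. It also requires careful handling of data combinations that could reveal identity. HIPAA itself recognizes two de-identification methods: Safe Harbor, which removes a defined list of 18 identifier types, and Expert Determination, in which a qualified expert concludes that the risk of re-identification is very small.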

When data is properly anonymized, it becomes much safer to use for analysis and machine learning. A simple transformation illustrates this idea. A dataset that contains full personal details can be converted into one where individuals are represented by random identifiers, dates are generalized, and locations are simplified. The analytical value remains, but the risk is significantly reduced.
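The transformation described above can be sketched with pandas. This is an illustration on made-up records, not a complete de-identification procedure: the column names (`zip`, `glucose`, `birth_year`, `zip3`) are assumptions, and year-only dates or three-digit ZIP codes may still need extra handling in practice, for example for very old patients or sparsely populated areas.

```python
import secrets

import pandas as pd

# Made-up example records; no real patient data.
raw = pd.DataFrame({
    "name": ["Ann Lee", "Bob Roy"],
    "dob": ["1980-02-03", "1975-11-30"],
    "zip": ["94110", "10027"],
    "glucose": [5.4, 6.1],
})

deid = pd.DataFrame({
    # Random token per row, not derived from any patient attribute.
    "patient_id": [secrets.token_hex(8) for _ in range(len(raw))],
    # Generalize the full date of birth to the year only.
    "birth_year": pd.to_datetime(raw["dob"]).dt.year,
    # Simplify location to the first three ZIP digits.
    "zip3": raw["zip"].str[:3],
    # Clinical measurement kept as-is for analysis.
    "glucose": raw["glucose"],
})
```

The name column is never copied into `deid`, so the output is built from an explicit allowlist of fields rather than by deleting fields from the raw table. If records must be linked back later, the mapping from token to patient has to be stored separately inside the controlled layer.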

Using MLJAR Studio in a HIPAA-compliant workflow

MLJAR Studio is particularly useful for AI in healthcare because it supports a local-first approach. This means that your data stays within your environment. You can load datasets, perform exploratory analysis, train models, and generate reports without sending anything to external servers. This reduces risk and makes it easier to maintain control over sensitive data. A typical workflow in MLJAR Studio starts with loading a dataset, removing sensitive fields, and transforming the remaining data into a safe format. After that, you can use AutoML and visualization tools to generate insights.

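```python
import pandas as pd

# Load the raw dataset inside the controlled environment.
df = pd.read_csv("patients.csv")

# Drop direct identifiers.
df = df.drop(columns=["name", "address", "phone", "email", "ssn"])

# Represent each patient by a sequential placeholder ID.
df["patient_id"] = ["P" + str(i) for i in range(len(df))]

# Generalize the date of birth to the year only, then drop the exact date.
df["year_of_birth"] = pd.to_datetime(df["dob"]).dt.year
df = df.drop(columns=["dob"])
```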

You can also extend this workflow using tools and prompts available in MLJAR, for example, a prompt designed to check whether your dataset still contains identifiable information. This helps ensure that your data is safe before further processing.
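A prompt-based check can be complemented by a simple rule-based scan. The sketch below is not an MLJAR feature. It is a hypothetical helper, `scan_for_identifiers`, that searches every column for a few common US identifier formats. The patterns are illustrative and will miss many identifiers, names in free text above all, so a clean result is not proof that the data is de-identified.

```python
import re

import pandas as pd

# Illustrative patterns only; they catch common US formats, not every identifier.
PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "phone": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def scan_for_identifiers(df: pd.DataFrame) -> dict:
    """Map each column to the identifier types found in its values."""
    findings = {}
    for col in df.columns:
        # Compare values as text so numeric columns are scanned too.
        values = df[col].dropna().astype(str)
        hits = [
            label
            for label, pattern in PATTERNS.items()
            if values.map(lambda v: bool(pattern.search(v))).any()
        ]
        if hits:
            findings[col] = hits
    return findings


# Made-up example: identifiers hiding in a free-text column.
df = pd.DataFrame({
    "patient_id": ["P0", "P1"],
    "notes": ["call 555-123-4567 after 5pm", "stable, contact ann@example.com"],
    "glucose": [5.4, 6.1],
})
report = scan_for_identifiers(df)
```

Free-text fields such as clinical notes are the usual place where identifiers survive after the obvious columns have been dropped, which is why the scan looks at values rather than column names.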

How to use external AI tools safely

External AI tools such as language models can still be very useful in healthcare workflows. They are especially helpful for generating summaries, explanations, and reports. However, they must be used carefully. The key rule is simple. Only anonymized data should be used with external AI tools. Raw patient data should never be shared outside your system. By following this rule, you can combine the power of AI with the requirements of HIPAA compliance.
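One way to follow this rule is to send an external model only aggregate statistics, never individual rows. The sketch below builds a prompt from per-group counts and means and suppresses small groups. The function `build_summary_prompt`, the threshold `MIN_GROUP_SIZE`, and the column names are assumptions made for illustration, and the example stops at building the text, so no external API is called.

```python
import pandas as pd

MIN_GROUP_SIZE = 5  # Assumed threshold; smaller groups are suppressed.


def build_summary_prompt(df: pd.DataFrame, group_col: str, value_col: str) -> str:
    """Build prompt text from per-group aggregates, never from individual rows."""
    stats = df.groupby(group_col)[value_col].agg(["count", "mean"])
    # Drop groups small enough that an aggregate could point to one person.
    stats = stats[stats["count"] >= MIN_GROUP_SIZE]
    lines = [
        f"- {group}: n={int(row['count'])}, mean {value_col}={row['mean']:.1f}"
        for group, row in stats.iterrows()
    ]
    header = "Summarize these cohort statistics for a clinical report:\n"
    return header + "\n".join(lines)


# Made-up example data; no real patient data.
df = pd.DataFrame({
    "age_band": ["40-49"] * 6 + ["50-59"] * 2,
    "glucose": [5.0, 5.2, 5.4, 5.6, 5.8, 6.0, 7.0, 7.2],
})
prompt = build_summary_prompt(df, "age_band", "glucose")
```

In this example the `50-59` group has only two patients, so it is left out of the prompt entirely. The right threshold depends on the dataset and on your organization's policy.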

Security requirements for HIPAA compliance

HIPAA compliance also requires strong security practices. Your system must ensure that only authorized users can access data. It must record who interacts with data and when. It must protect data through encryption, both when stored and when transmitted. It must also include backup and recovery mechanisms. These requirements are essential for maintaining confidentiality and integrity.
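Two of these requirements, access control and recording who touched the data, can be illustrated with a small decorator. This is a sketch under stated assumptions: the role names, the in-memory `audit_log` list, and the `read_patient_table` function are hypothetical, and a real system would write to a protected, append-only log store. Encryption and backups are not covered here.

```python
import functools
from datetime import datetime, timezone

AUTHORIZED_ROLES = {"clinician", "data_steward"}  # Hypothetical role names.
audit_log = []  # In-memory stand-in for a protected, append-only log store.


def audited(action: str):
    """Record who attempted `action` and when, then enforce the role check."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(user: str, role: str, *args, **kwargs):
            allowed = role in AUTHORIZED_ROLES
            # Log every attempt, including the ones that are denied.
            audit_log.append({
                "user": user,
                "action": action,
                "allowed": allowed,
                "at": datetime.now(timezone.utc).isoformat(),
            })
            if not allowed:
                raise PermissionError(f"{user} ({role}) may not {action}")
            return func(user, role, *args, **kwargs)
        return wrapper
    return decorator


@audited("read patient table")
def read_patient_table(user: str, role: str) -> str:
    return "rows loaded"  # Placeholder for the real data access.


result = read_patient_table("alice", "clinician")
try:
    read_patient_table("mallory", "intern")
    denied = False
except PermissionError:
    denied = True
```

The entry is written before the permission check raises, so denied attempts leave a trace as well as successful reads.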

Local vs cloud in HIPAA-compliant AI systems

Choosing between local and cloud infrastructure has a major impact on compliance. A local approach, using tools like MLJAR Studio, keeps data on infrastructure you control and simplifies compliance. A cloud-based approach can also be compliant, but it requires additional legal agreements, typically a Business Associate Agreement (BAA) with the provider, and careful configuration. For many teams, starting with a local-first architecture is the safest and simplest option.

FAQ: AI in healthcare and HIPAA compliance

Can you use AI in healthcare with HIPAA?

Yes, AI can be used in healthcare as long as the system is designed correctly and protected health information is properly handled and anonymized.

Is MLJAR Studio HIPAA compliant?

MLJAR Studio itself is not a HIPAA-compliant system, but it can be used as part of a HIPAA-compliant architecture when data is processed locally and handled correctly.

Can I send patient data to ChatGPT?

No, raw patient data should never be sent to external AI tools. Only anonymized data can be used safely.

What is the safest way to use AI in healthcare?

The safest approach is to keep sensitive data local, anonymize it before processing, and use tools like MLJAR Studio within a controlled environment.

Final thoughts

Many teams believe that AI in healthcare is too risky because of HIPAA. In reality, the risk does not come from AI itself. The risk comes from poor system design. When you separate sensitive data from processing, anonymize your datasets, and use tools like MLJAR Studio in a controlled environment, you can safely unlock the full potential of AI in healthcare. This is where real advantage appears. Not from avoiding AI, but from using it correctly.
