What is Hyperparameter Tuning?

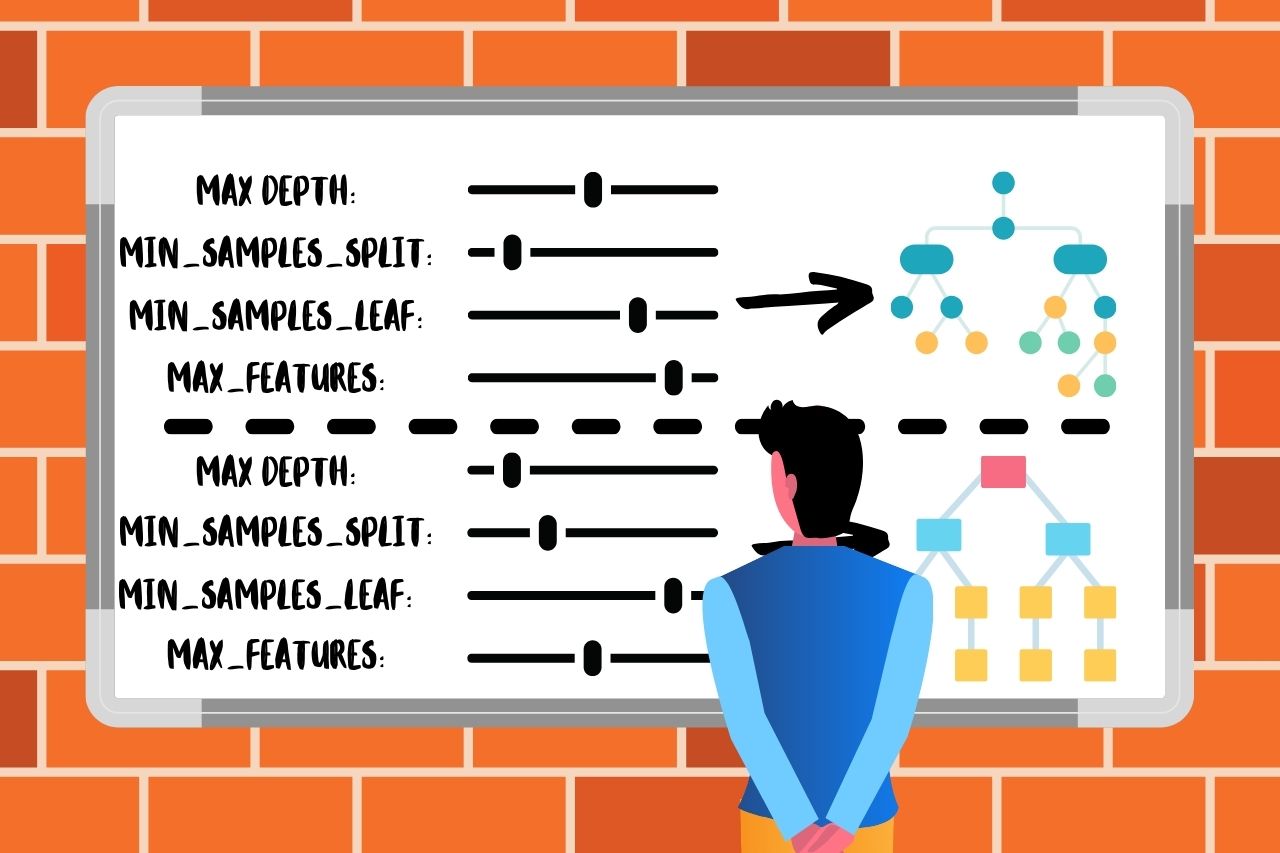

Hyperparameter Tuning in machine learning refers to the process of selecting the optimal set of hyperparameters for a learning algorithm. Hyperparameters are parameters that are not learned from the data but are set before the training process begins. Examples include the learning rate for training a neural network, the number of trees in a random forest, or the penalty parameter in a support vector machine.
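
The distinction between hyperparameters and learned parameters can be illustrated with a minimal sketch, assuming scikit-learn is available. The dataset and the chosen `alpha` value are illustrative only: `alpha` is set by hand before training, while `coef_` is estimated from the data.

```python
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge

# Small synthetic dataset, used only for illustration
X, y = make_regression(n_samples=50, n_features=3, noise=0.1, random_state=0)

# alpha is a hyperparameter: it is chosen before training starts
model = Ridge(alpha=1.0)
model.fit(X, y)

# coef_ holds parameters: they are learned from the data during fit()
hyperparameters = model.get_params()
learned_coefficients = model.coef_

print("Hyperparameter alpha:", hyperparameters["alpha"])
print("Learned coefficients:", learned_coefficients)
```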

Importance of Hyperparameter Tuning:

Proper Hyperparameter Tuning can significantly improve the performance of a machine learning model. It helps in:

- Improving model accuracy - Fine-tuning hyperparameters can lead to better performance on validation data, which usually translates to better performance on unseen test data.

- Reducing overfitting/underfitting - Proper selection of hyperparameters can help strike a balance between overfitting (model is too complex) and underfitting (model is too simple).

- Enhancing training efficiency - Optimal hyperparameters can speed up the training process and convergence.

When to Use Hyperparameter Tuning:

Hyperparameter Tuning is essential when:

- You're working on a new dataset and need to establish the best possible model performance.

- You're improving an existing model to achieve better accuracy or efficiency.

- You're trying different algorithms and need to optimize each for fair comparison.

- You're preparing a model for deployment and need to ensure robustness and reliability.

How Hyperparameter Tuning is Done:

There are several methods for Hyperparameter Tuning:

Grid Search:

- A systematic approach where you define a grid of possible hyperparameter values and evaluate all possible combinations.

- ✓ Simple and exhaustive.

- ✗ Computationally expensive and time-consuming, especially with a large number of hyperparameters.
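
To see why the cost of grid search grows quickly, the short sketch below counts the candidates in a small grid using scikit-learn's `ParameterGrid`. The grid values and the 5-fold setting are assumptions chosen for illustration.

```python
from sklearn.model_selection import ParameterGrid

# Illustrative grid: 4 values of alpha times 3 solvers
param_grid = {
    "alpha": [0.1, 1.0, 10.0, 100.0],
    "solver": ["auto", "svd", "cholesky"],
}

combinations = list(ParameterGrid(param_grid))
n_candidates = len(combinations)  # 4 * 3 = 12 combinations

# With 5-fold cross-validation, every combination is trained 5 times
cv_folds = 5
n_fits = n_candidates * cv_folds  # 12 * 5 = 60 model fits

print("Candidates:", n_candidates)
print("Total fits:", n_fits)
```

Adding one more hyperparameter with 5 values would multiply both numbers by 5, which is the main practical limit of this method.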

Random Search:

- Instead of evaluating all combinations, it randomly samples a defined number of combinations.

- ✓ More efficient than grid search, especially when some hyperparameters have less impact on performance.

- ✗ May still miss the optimal combination.
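
A minimal sketch of random search, assuming scikit-learn's `RandomizedSearchCV` and SciPy's `loguniform` distribution. The range of `alpha` and the budget of 20 samples are illustrative choices, not recommendations.

```python
from scipy.stats import loguniform
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.model_selection import RandomizedSearchCV

# Synthetic data, used only for illustration
X, y = make_regression(n_samples=100, n_features=10, noise=0.1, random_state=42)

# Sample alpha on a log scale between 0.001 and 1000 instead of listing fixed values
param_distributions = {"alpha": loguniform(1e-3, 1e3)}

random_search = RandomizedSearchCV(
    estimator=Ridge(),
    param_distributions=param_distributions,
    n_iter=20,  # number of randomly sampled candidates
    scoring="neg_mean_squared_error",
    cv=5,
    random_state=42,
)
random_search.fit(X, y)

print("Best hyperparameters:", random_search.best_params_)
```

The budget is fixed by `n_iter`, so the cost does not grow when more hyperparameters are added to the search space.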

Bayesian Optimization:

- Uses a probabilistic model to predict the performance of hyperparameters and selects the most promising ones to evaluate.

- ✓ Often needs fewer evaluations than grid or random search to find good hyperparameters, which matters when each evaluation is expensive.

- ✗ More complex to implement and requires careful tuning of the optimization process itself.
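
The idea can be sketched with a hand-written loop: a Gaussian process serves as the probabilistic model and expected improvement selects the next value to try. This is a simplified illustration built from scikit-learn and SciPy components, not the implementation used by any particular tuning library. The search range, the number of iterations, and the helper `objective` are assumptions.

```python
import numpy as np
from scipy.stats import norm
from sklearn.datasets import make_regression
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import Matern
from sklearn.linear_model import Ridge
from sklearn.model_selection import cross_val_score

X, y = make_regression(n_samples=100, n_features=10, noise=10.0, random_state=42)


def objective(log_alpha):
    """Mean 5-fold CV score (negative MSE, higher is better) for alpha = 10**log_alpha."""
    model = Ridge(alpha=10.0 ** log_alpha)
    return cross_val_score(model, X, y, scoring="neg_mean_squared_error", cv=5).mean()


# Candidate values of log10(alpha) that the surrogate model may propose
candidates = np.linspace(-3.0, 3.0, 200).reshape(-1, 1)

# Start from a few random evaluations
rng = np.random.default_rng(0)
tried = list(rng.uniform(-3.0, 3.0, size=3))
scores = [objective(value) for value in tried]

for _ in range(10):
    # Fit the surrogate to the (hyperparameter, score) pairs observed so far
    gp = GaussianProcessRegressor(
        kernel=Matern(nu=2.5), alpha=1e-4, normalize_y=True, random_state=0
    )
    gp.fit(np.array(tried).reshape(-1, 1), np.array(scores))

    # Expected improvement over the best score observed so far
    mean, std = gp.predict(candidates, return_std=True)
    std = np.maximum(std, 1e-9)
    improvement = mean - max(scores)
    z = improvement / std
    expected_improvement = improvement * norm.cdf(z) + std * norm.pdf(z)

    # Evaluate the most promising candidate next
    next_value = float(candidates[np.argmax(expected_improvement), 0])
    tried.append(next_value)
    scores.append(objective(next_value))

best_log_alpha = tried[int(np.argmax(scores))]
print("Best alpha found:", 10.0 ** best_log_alpha)
```

In practice a dedicated library usually handles the surrogate model and the acquisition function; the loop above only shows the mechanism.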

Gradient-Based Optimization:

- Computes the gradient of a validation objective with respect to continuous hyperparameters and updates them with gradient descent, typically used in neural networks (e.g., for learning rates or regularization strengths).

- ✓ Efficient for continuous hyperparameters.

- ✗ May get stuck in local minima and does not apply directly to discrete hyperparameters.
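
A small worked sketch of the gradient-based idea, using ridge regression without an intercept because its fit has a closed form. The data, the learning rate, and the function name `validation_loss_and_gradient` are assumptions made for illustration. The regularization strength is written as `lam = exp(theta)` so that it stays positive, and the gradient of the validation loss with respect to `theta` is obtained by differentiating the closed-form solution.

```python
import numpy as np

# Synthetic train and validation sets (illustrative only)
rng = np.random.default_rng(0)
n_features = 20
true_weights = rng.normal(size=n_features)
X_train = rng.normal(size=(40, n_features))
y_train = X_train @ true_weights + rng.normal(size=40)
X_val = rng.normal(size=(40, n_features))
y_val = X_val @ true_weights + rng.normal(size=40)


def validation_loss_and_gradient(theta):
    """Validation MSE of ridge regression with lam = exp(theta), and d(loss)/d(theta)."""
    lam = np.exp(theta)
    A = X_train.T @ X_train + lam * np.eye(n_features)
    weights = np.linalg.solve(A, X_train.T @ y_train)  # closed-form ridge fit
    residual = X_val @ weights - y_val
    loss = np.mean(residual ** 2)

    dloss_dweights = 2.0 * X_val.T @ residual / len(y_val)
    # Differentiating A w = X^T y with respect to lam gives dw/dlam = -A^{-1} w
    dweights_dlam = -np.linalg.solve(A, weights)
    # Chain rule: d(lam)/d(theta) = lam
    dloss_dtheta = (dloss_dweights @ dweights_dlam) * lam
    return loss, dloss_dtheta


# Start from a deliberately large regularization strength
theta = np.log(100.0)
learning_rate = 0.05
history = []

for _ in range(500):
    loss, gradient = validation_loss_and_gradient(theta)
    history.append(loss)
    theta -= learning_rate * gradient

print("Tuned regularization strength:", np.exp(theta))
print("Validation MSE: start", history[0], "end", history[-1])
```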

Evolutionary Algorithms:

- Uses techniques inspired by biological evolution, such as mutation, crossover, and selection, to evolve a population of hyperparameter sets.

- ✓ Can escape local minima and handle complex, multimodal hyperparameter spaces.

- ✗ Computationally intensive and requires careful parameterization of the evolutionary process.
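
A minimal sketch of an evolutionary search, written by hand for illustration rather than taken from a specific library. Each individual is a pair `[log10(alpha), l1_ratio]` for scikit-learn's `ElasticNet`. The population size, mutation scales, bounds, and number of generations are arbitrary assumptions.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import ElasticNet
from sklearn.model_selection import cross_val_score

X, y = make_regression(n_samples=100, n_features=10, noise=10.0, random_state=42)
rng = np.random.default_rng(0)

# Bounds for each gene: [log10(alpha), l1_ratio]
LOWER = np.array([-3.0, 0.01])
UPPER = np.array([1.0, 1.0])


def fitness(individual):
    """Mean 5-fold CV score (negative MSE, higher is better) of one hyperparameter set."""
    log_alpha, l1_ratio = individual
    model = ElasticNet(alpha=10.0 ** log_alpha, l1_ratio=l1_ratio, max_iter=10_000)
    return cross_val_score(model, X, y, scoring="neg_mean_squared_error", cv=5).mean()


# Initial population of 10 random hyperparameter sets
population = rng.uniform(LOWER, UPPER, size=(10, 2))
best_per_generation = []

for generation in range(5):
    scores = np.array([fitness(individual) for individual in population])
    best_per_generation.append(scores.max())

    # Selection: keep the better half of the population
    survivors = population[np.argsort(scores)[::-1][:5]]

    children = []
    for _ in range(5):
        # Crossover: take each gene from one of two distinct parents
        parent_a, parent_b = survivors[rng.choice(5, size=2, replace=False)]
        mask = rng.random(2) < 0.5
        child = np.where(mask, parent_a, parent_b)
        # Mutation: add small Gaussian noise, then clip to the bounds
        child = child + rng.normal(scale=[0.3, 0.1])
        children.append(np.clip(child, LOWER, UPPER))

    population = np.vstack([survivors, children])

final_scores = np.array([fitness(individual) for individual in population])
best_individual = population[np.argmax(final_scores)]
print("Best alpha:", 10.0 ** best_individual[0], "best l1_ratio:", best_individual[1])
```

Because the survivors are carried over unchanged, the best score in the population never gets worse from one generation to the next.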

Automated Machine Learning (AutoML):

- Automated processes that combine various techniques to search for the best model and hyperparameters.

- ✓ Simplifies the tuning process, often leading to good results with minimal human intervention.

- ✗ May be a black-box approach, making it harder to understand the model selection process.

Practical Steps for Hyperparameter Tuning:

- Select a Validation Strategy: Choose a method to evaluate model performance (e.g., cross-validation) to ensure reliable estimation of hyperparameter performance.

- Define a Hyperparameter Search Space: Identify the hyperparameters to tune and their possible values or ranges.

- Choose a Tuning Method: Select one of the tuning methods described above based on the problem requirements and resources available.

- Run the Tuning Process: Execute the tuning method, systematically or randomly searching through the hyperparameter space.

- Evaluate and Select the Best Model: Compare the performance of models with different hyperparameter settings and select the one with the best validation performance.

- Refine and Repeat: Optionally, refine the search space or tuning method based on initial results and repeat the tuning process.
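
The steps above can be sketched end to end as follows. This is one possible arrangement under stated assumptions: a synthetic dataset, a Ridge model, a small grid for `alpha`, and a 25% test split that the tuning process never sees.

```python
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import GridSearchCV, KFold, train_test_split

X, y = make_regression(n_samples=200, n_features=10, noise=10.0, random_state=42)

# Hold out a test set that is not used anywhere in the tuning process
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=42
)

# Select a validation strategy: shuffled 5-fold cross-validation
cv = KFold(n_splits=5, shuffle=True, random_state=42)

# Define the hyperparameter search space
param_grid = {"alpha": [0.01, 0.1, 1.0, 10.0, 100.0]}

# Choose a tuning method and run it on the training data only
search = GridSearchCV(Ridge(), param_grid, scoring="neg_mean_squared_error", cv=cv)
search.fit(X_train, y_train)

# Evaluate the selected model on the held-out test set
test_mse = mean_squared_error(y_test, search.predict(X_test))

print("Best hyperparameters:", search.best_params_)
print("Test MSE:", test_mse)
```

If the best value lies at the edge of the grid, that is a hint to widen or shift the search space and repeat the search.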

Hyperparameter Tuning is a critical step in the machine learning pipeline that can make a significant difference in the final model's performance and generalization ability.

Example:

Here is an example of Python code that performs Hyperparameter Tuning on a Ridge regression model (a regularized linear model) using GridSearchCV from the sklearn library.

```python
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import GridSearchCV

# Generate a synthetic regression dataset
X, y = make_regression(n_samples=100, n_features=10, noise=0.1, random_state=42)

# Define the model: Ridge Regression
model = Ridge()

# Define the hyperparameter space to search over
param_grid = {
    'alpha': [0.1, 1.0, 10.0, 100.0],  # Regularization strength
    'solver': ['auto', 'svd', 'cholesky', 'lsqr', 'sag', 'saga'],  # Different solvers to try
}

# Set up GridSearchCV to perform the hyperparameter tuning
grid_search = GridSearchCV(
    estimator=model,
    param_grid=param_grid,
    scoring='neg_mean_squared_error',
    cv=5,
    n_jobs=-1,
)

# Fit the GridSearchCV object to the data to find the best hyperparameters
grid_search.fit(X, y)

# Retrieve the best model and hyperparameters
best_model = grid_search.best_estimator_
best_params = grid_search.best_params_

# Print the best hyperparameters found
print("Best Hyperparameters:")
print(best_params)

# Make predictions using the best model
y_pred = best_model.predict(X)

# Calculate and print the mean squared error
mse = mean_squared_error(y, y_pred)
print("Mean Squared Error:", mse)
```

Explanation of the Code:

- Data Preparation: `X, y = make_regression(n_samples=100, n_features=10, noise=0.1, random_state=42)` generates a synthetic dataset for regression with 100 samples, each having 10 features, and some noise.

- Model Definition: `model = Ridge()` initializes a Ridge Regression model.

- Hyperparameter Grid: `param_grid` specifies the hyperparameters and their values to be explored: `alpha` (regularization strength) and `solver` (algorithm used for optimization).

- Grid Search Setup: `GridSearchCV(estimator=model, param_grid=param_grid, scoring='neg_mean_squared_error', cv=5, n_jobs=-1)` creates a `GridSearchCV` object with 5-fold cross-validation, using negative mean squared error as the scoring metric. `n_jobs=-1` allows parallel computation.

- Fitting the Grid Search: `grid_search.fit(X, y)` trains the model using all combinations of hyperparameters and finds the best combination based on the specified scoring metric.

- Best Model and Hyperparameters: `grid_search.best_estimator_` and `grid_search.best_params_` retrieve the best model and the corresponding hyperparameters, which are then printed.

- Model Evaluation: `best_model.predict(X)` makes predictions on the training data using the best model, and `mean_squared_error(y, y_pred)` calculates the mean squared error. Because these are the same data that were used for tuning, this value is an optimistic estimate; a held-out test set gives a more reliable measure of performance on unseen data.

This example demonstrates how to use GridSearchCV to perform Hyperparameter Tuning for a Ridge regression model in sklearn.

5 Pros and Cons of Hyperparameter Tuning:

Advantages:

- Improved Model Performance: Hyperparameter Tuning can significantly enhance the performance of a model by finding the optimal settings that lead to better accuracy, precision, recall, or other relevant metrics.

- Better Generalization: Proper tuning helps in finding a balance between underfitting and overfitting, leading to models that generalize well on unseen data.

- Automated Optimization: Tools like GridSearchCV and RandomizedSearchCV automate the process, making it easier and more efficient to explore different hyperparameter configurations.

- Enhanced Understanding: The process of tuning can provide insights into the model's behavior and the importance of different hyperparameters, leading to better model understanding and refinement.

- Efficiency in Training: Optimal hyperparameters can reduce training time and improve the convergence speed of the model.

Disadvantages:

- Computationally Intensive: The process can be very time-consuming and require significant computational resources, especially with large datasets and complex models.

- Complexity: The tuning process can become complex, particularly when dealing with many hyperparameters and a large search space.

- Risk of Overfitting: There is a risk of overfitting the hyperparameters to the validation set, leading to a model that performs well on validation data but poorly on new, unseen data.

- Diminishing Returns: After a certain point, further tuning may yield only marginal improvements in performance, not justifying the additional computational cost.

- Requires Expertise: Effective Hyperparameter Tuning often requires a good understanding of the model and the hyperparameters involved, making it challenging for beginners.
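
One common way to reduce the risk of overfitting the hyperparameters to the validation set is nested cross-validation: an inner loop tunes the hyperparameters, and an outer loop estimates how well the whole tuning procedure generalizes. The sketch below assumes scikit-learn; the fold counts and the grid are illustrative.

```python
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.model_selection import GridSearchCV, KFold, cross_val_score

X, y = make_regression(n_samples=150, n_features=10, noise=10.0, random_state=42)

# Inner loop: selects alpha using only the training part of each outer fold
inner_cv = KFold(n_splits=3, shuffle=True, random_state=0)
inner_search = GridSearchCV(
    Ridge(),
    {"alpha": [0.1, 1.0, 10.0, 100.0]},
    scoring="neg_mean_squared_error",
    cv=inner_cv,
)

# Outer loop: scores the tuned model on data that the inner search never saw
outer_cv = KFold(n_splits=5, shuffle=True, random_state=1)
outer_scores = cross_val_score(
    inner_search, X, y, scoring="neg_mean_squared_error", cv=outer_cv
)

print("Nested CV mean squared error:", -outer_scores.mean())
```

The cost is higher, since the inner search is repeated once per outer fold, which ties back to the computational cost listed above.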

Literature:

- "Hands-On Machine Learning with Scikit-Learn, Keras, and TensorFlow" by Aurélien Géron - This book provides practical guidance on implementing and tuning machine learning models using popular Python libraries. It includes chapters on Hyperparameter Tuning techniques such as Grid Search and Random Search.

- "The Elements of Statistical Learning" by Trevor Hastie, Robert Tibshirani, and Jerome Friedman - This book is a foundational text in machine learning and statistics, discussing various methods for model selection and tuning.

- "Pattern Recognition and Machine Learning" by Christopher M. Bishop - A comprehensive textbook that covers a range of machine learning topics, including the theory behind Hyperparameter Tuning and model selection.

Conclusions:

Hyperparameter Tuning is a critical process in machine learning that involves selecting the optimal set of hyperparameters to enhance model performance. By carefully tuning these parameters, practitioners can significantly improve model accuracy, generalization, and efficiency. Techniques such as Grid Search, Random Search, and Bayesian Optimization are commonly used to automate and streamline this process.

However, Hyperparameter Tuning also comes with challenges, including computational complexity, risk of overfitting, and the need for expert knowledge. Despite these challenges, the benefits often outweigh the drawbacks, making Hyperparameter Tuning an essential step in the machine learning pipeline.

In summary, effective Hyperparameter Tuning can be the difference between a good model and a great one, making it a crucial skill for anyone involved in developing machine learning models.
