What is Gradient Boosting Machine (GBM)?

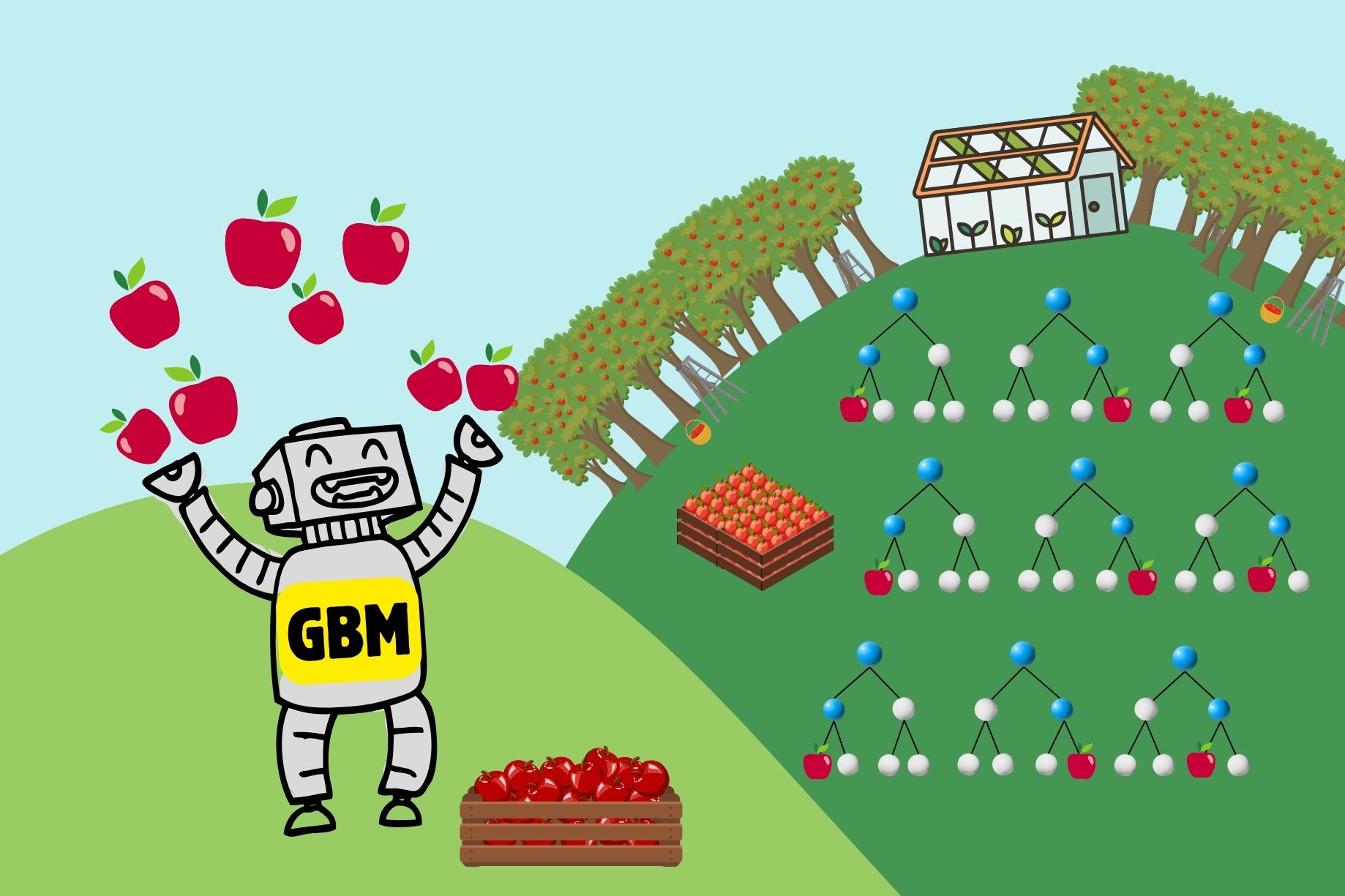

In machine learning, a GBM (Gradient Boosting Machine) is an ensemble learning method that builds models in a sequential manner, primarily used for regression and classification tasks.

Key Concepts:

- Boosting - Boosting is an ensemble technique that combines the predictions of several base models (typically weak learners, like decision trees) to improve overall performance. It works by training models sequentially, where each new model tries to correct the errors made by the previous ones.

- Gradient Boosting - Gradient boosting is a specific type of boosting that applies gradient descent to the loss function. The loss function measures how well the model's predictions match the actual data. At each step, a new learner is fit to the negative gradient of the loss with respect to the current predictions, so adding it moves the ensemble in the direction of steepest descent.

- Weak Learners - In the context of GBM, weak learners are usually shallow decision trees. These trees are called weak because each one, on its own, typically performs only slightly better than random guessing.

- Sequential Training - Models are trained one after another, and each new model focuses on the errors (residuals) of the ensemble built so far. The idea is to reduce these errors gradually.
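The link between "errors" and "gradients" can be checked numerically. The sketch below is only an illustration: the helper names (`squared_error_loss`, `log_loss`, `numerical_negative_gradient`) and the sample values are made up for this example. It shows that for squared-error loss the negative gradient with respect to the prediction is the ordinary residual, and that for log loss on a raw score it is the difference between the label and the predicted probability.

```python
import numpy as np

def squared_error_loss(y, f):
    """Per-sample squared error, with the conventional 1/2 factor."""
    return 0.5 * (y - f) ** 2

def log_loss(y, f):
    """Per-sample binary log loss, where f is a raw score (log-odds)."""
    p = 1.0 / (1.0 + np.exp(-f))
    return -(y * np.log(p) + (1 - y) * np.log(1 - p))

def numerical_negative_gradient(loss, y, f, eps=1e-6):
    """Central-difference estimate of -dL/df for each sample."""
    return -(loss(y, f + eps) - loss(y, f - eps)) / (2 * eps)

# Regression: the negative gradient matches the residual y - f
y_reg = np.array([3.0, -1.0, 2.5])   # actual targets
f_reg = np.array([2.0, 0.5, 2.5])    # current predictions
neg_grad_reg = numerical_negative_gradient(squared_error_loss, y_reg, f_reg)
residuals = y_reg - f_reg

# Classification: the negative gradient matches y - p
y_cls = np.array([1.0, 0.0, 1.0])    # actual labels
f_cls = np.array([0.3, -1.2, 2.0])   # current raw scores
neg_grad_cls = numerical_negative_gradient(log_loss, y_cls, f_cls)
label_minus_prob = y_cls - 1.0 / (1.0 + np.exp(-f_cls))

print(np.allclose(neg_grad_reg, residuals, atol=1e-6))
print(np.allclose(neg_grad_cls, label_minus_prob, atol=1e-6))
```

This is why the same procedure works for both regression and classification: only the loss function, and therefore the quantity each new tree is fit to, changes.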

How GBM Works:

- Initialization: The process starts by initializing the model with a simple constant prediction, often the mean of the target variable for regression or a constant such as the log-odds of the positive class for classification.

- Iterative Training:

  - Calculate Residuals - For each iteration, calculate the residuals (errors) between the actual values and the predictions made by the current model. For squared-error loss these are plain differences; for other losses, the negative gradient of the loss (the pseudo-residuals) plays the same role.

  - Fit a New Model - Train a new model on these residuals. This new model aims to predict the residuals (errors) of the current model.

  - Update the Model - The predictions of the new model are added to the existing model's predictions to improve accuracy.

  - Adjust Step Size - Usually, a learning rate (shrinkage) scales each new model's contribution before it is added, which helps to prevent overfitting.

- Final Prediction: After a specified number of iterations, the initial prediction plus the scaled predictions of all fitted models form the final output.
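The steps above can be sketched in a few lines for the squared-error case. This is a minimal illustration rather than how any particular library implements it: the class name `SimpleGBM`, its parameters, and the synthetic dataset are assumptions made for this example, and scikit-learn's `DecisionTreeRegressor` is used as the weak learner.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.tree import DecisionTreeRegressor

class SimpleGBM:
    """Toy gradient boosting for squared-error regression (illustrative only)."""

    def __init__(self, n_estimators=50, learning_rate=0.1, max_depth=2):
        self.n_estimators = n_estimators
        self.learning_rate = learning_rate
        self.max_depth = max_depth

    def fit(self, X, y):
        # Initialization: start from a constant prediction (the target mean)
        self.init_ = float(np.mean(y))
        self.trees_ = []
        pred = np.full(len(y), self.init_)
        for _ in range(self.n_estimators):
            # Calculate residuals of the current ensemble
            residuals = y - pred
            # Fit a new shallow tree to those residuals
            tree = DecisionTreeRegressor(max_depth=self.max_depth, random_state=0)
            tree.fit(X, residuals)
            # Update the ensemble, scaled by the learning rate
            pred += self.learning_rate * tree.predict(X)
            self.trees_.append(tree)
        return self

    def predict(self, X):
        # Final prediction: initial constant plus all scaled tree outputs
        pred = np.full(X.shape[0], self.init_)
        for tree in self.trees_:
            pred += self.learning_rate * tree.predict(X)
        return pred

X, y = make_regression(n_samples=300, n_features=5, noise=5.0, random_state=0)
model = SimpleGBM().fit(X, y)

baseline_mse = float(np.mean((y - model.init_) ** 2))
boosted_mse = float(np.mean((y - model.predict(X)) ** 2))
print(f"Training MSE with the mean only: {baseline_mse:.1f}")
print(f"Training MSE after boosting:     {boosted_mse:.1f}")
```

The comparison is made on the training data only, to show that each round reduces the remaining error; it says nothing about generalization, which needs a held-out set.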

GBM in scikit-learn:

```python
# Import necessary libraries
import numpy as np
import pandas as pd
import matplotlib.pyplot as plt
from sklearn.datasets import make_regression
from sklearn.model_selection import train_test_split
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.metrics import mean_squared_error

# Generate a synthetic dataset
X, y = make_regression(n_samples=1000, n_features=20, noise=0.1, random_state=42)

# Split the dataset into training and testing sets
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

# Initialize the Gradient Boosting Regressor
gbm = GradientBoostingRegressor(n_estimators=100, learning_rate=0.1, max_depth=3, random_state=42)

# Train the model
gbm.fit(X_train, y_train)

# Make predictions
y_pred = gbm.predict(X_test)

# Evaluate the model
mse = mean_squared_error(y_test, y_pred)
print(f"Mean Squared Error: {mse:.2f}")

# Optional: Feature Importance
feature_importance = gbm.feature_importances_
sorted_idx = np.argsort(feature_importance)
plt.barh(range(len(sorted_idx)), feature_importance[sorted_idx], align='center')
plt.xlabel("Feature Importance")
plt.ylabel("Feature Index")
plt.show()
```

Explanation

- Import Libraries: Import the necessary libraries, including `numpy`, `pandas`, and `scikit-learn` components.

- Generate Dataset: Use `make_regression` to create a synthetic dataset for a regression task.

- Split Dataset: Split the dataset into training and testing sets using `train_test_split`.

- Initialize GBM: Create an instance of `GradientBoostingRegressor` with specified parameters such as `n_estimators`, `learning_rate`, and `max_depth`.

- Train Model: Fit the model on the training data.

- Make Predictions: Use the trained model to make predictions on the test data.

- Evaluate Model: Calculate the Mean Squared Error (MSE) to evaluate the model's performance.

- Feature Importance: Optionally, plot the feature importance to understand which features are most influential in the predictions.
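Because the model is built one tree at a time, it can also be useful to look at how the test error changes as trees are added. The sketch below repeats the same setup and uses the `staged_predict` method of `GradientBoostingRegressor`, which yields the ensemble's predictions after each boosting stage. The variable names `test_mse` and `best_n` are chosen for this example.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split

# Same synthetic data and model settings as in the example above
X, y = make_regression(n_samples=1000, n_features=20, noise=0.1, random_state=42)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

gbm = GradientBoostingRegressor(n_estimators=100, learning_rate=0.1, max_depth=3, random_state=42)
gbm.fit(X_train, y_train)

# Test MSE after 1, 2, ..., n_estimators trees
test_mse = np.array([
    mean_squared_error(y_test, y_stage)
    for y_stage in gbm.staged_predict(X_test)
])

best_n = int(np.argmin(test_mse)) + 1  # stages are counted from 1
print(f"Test MSE after 1 tree:    {test_mse[0]:.2f}")
print(f"Test MSE after all trees: {test_mse[-1]:.2f}")
print(f"Lowest test MSE at {best_n} trees")
```

If the lowest error occurs well before the last stage, the model probably has more trees than it needs; if it occurs at the last stage, adding trees or raising the learning rate may still help.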

History of GBM:

The history of Gradient Boosting Machines (GBMs) is closely tied to the broader development of boosting methods in machine learning. The question of whether multiple weak learners can be combined into a strong one was posed by Michael Kearns and Leslie Valiant in the late 1980s, and Robert Schapire answered it affirmatively in 1990. The idea was developed further by Yoav Freund and Robert Schapire in the mid-1990s with the introduction of the AdaBoost algorithm, which became a foundational method in ensemble learning.

Building on the principles of AdaBoost, Jerome H. Friedman introduced gradient boosting in his seminal paper "Greedy Function Approximation: A Gradient Boosting Machine," which circulated as a technical report in 1999 and was published in The Annals of Statistics in 2001. Friedman recast boosting as gradient descent in function space: each new learner is fit to the negative gradient of a chosen differentiable loss function. This made it possible to use gradient boosting for a wide range of predictive modeling tasks, including both regression and classification.

In 2002, Friedman published a follow-up paper, "Stochastic Gradient Boosting," which introduced randomness into the gradient boosting process: at each iteration, the new learner is fit on a random subsample of the training data drawn without replacement. This variant improved the robustness of the algorithm, reduced the risk of overfitting, and lowered the cost of each iteration, making the technique more practical and widely applicable.

Over the years, several optimized implementations of gradient boosting have been developed, further popularizing the technique. Notably, XGBoost, first released by Tianqi Chen in 2014 and described in a 2016 paper by Chen and Carlos Guestrin, brought significant improvements in speed and scalability, making it a favorite among data scientists and machine learning practitioners. Following XGBoost, LightGBM (from Microsoft) and CatBoost (from Yandex) emerged as prominent gradient boosting frameworks, each introducing its own enhancements, such as histogram-based tree building and better handling of categorical features.

Today, gradient boosting remains a powerful and versatile tool in the machine learning arsenal, widely used in various domains from finance to healthcare, and continues to be an area of active research and development.

Pros and Cons:

Advantages:

- High Performance - GBM often achieves high accuracy and strong predictive performance, especially on tabular data.

- Flexibility - It can handle various types of data and loss functions, making it versatile for different tasks.
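As an illustration of the flexibility in loss functions, the sketch below trains scikit-learn's `GradientBoostingRegressor` twice on training targets that contain a few artificially injected outliers: once with its default squared-error loss and once with `loss="huber"`. The dataset, the outlier offset of 1000, and the variable names are assumptions made for this example. Huber loss is generally less sensitive to outliers, but how much it helps depends on the data.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.model_selection import train_test_split

X, y = make_regression(n_samples=500, n_features=10, noise=1.0, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=0)

# Corrupt 5% of the training targets with a large offset (illustrative outliers)
rng = np.random.default_rng(0)
y_train_noisy = y_train.copy()
outlier_idx = rng.choice(len(y_train), size=len(y_train) // 20, replace=False)
y_train_noisy[outlier_idx] += 1000.0

models = {
    "squared error (default)": GradientBoostingRegressor(random_state=0),
    "huber": GradientBoostingRegressor(loss="huber", random_state=0),
}

# Train on the corrupted targets, evaluate on clean test targets
test_mae = {}
for name, model in models.items():
    model.fit(X_train, y_train_noisy)
    test_mae[name] = float(np.mean(np.abs(model.predict(X_test) - y_test)))
    print(f"{name}: MAE on clean test data = {test_mae[name]:.1f}")
```

Only the `loss` argument differs between the two models; the boosting procedure itself is unchanged.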

Disadvantages:

- Computationally Intensive - Training can be slow, especially with large datasets, because the models are built sequentially.

- Overfitting - GBM can overfit if the number of iterations is too high or if the models are too complex.

- Hyperparameter Tuning - Requires careful tuning of hyperparameters, such as learning rate, number of iterations, and tree depth.
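Some of these drawbacks can be reduced with built-in options. The sketch below uses three `GradientBoostingRegressor` parameters: `subsample` (fit each tree on a random fraction of the training rows, as in stochastic gradient boosting), and `validation_fraction` together with `n_iter_no_change` (hold out part of the training data and stop adding trees once the validation score stops improving). The specific values are assumptions chosen for illustration, not recommended settings.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split

X, y = make_regression(n_samples=1000, n_features=20, noise=20.0, random_state=42)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

gbm = GradientBoostingRegressor(
    n_estimators=500,          # upper bound on the number of trees
    learning_rate=0.05,
    max_depth=3,
    subsample=0.8,             # each tree sees a random 80% of the training rows
    validation_fraction=0.1,   # held out internally for early stopping
    n_iter_no_change=10,       # stop after 10 rounds without improvement
    random_state=42,
)
gbm.fit(X_train, y_train)

test_mse = mean_squared_error(y_test, gbm.predict(X_test))
print(f"Trees actually fitted: {gbm.n_estimators_} (limit was 500)")
print(f"Test MSE: {test_mse:.2f}")
```

With early stopping enabled, `n_estimators` acts as a ceiling rather than a value that must be tuned exactly, which removes one hyperparameter from the search. Training may still run to the limit if the validation score keeps improving.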

Common Implementations:

- XGBoost - An optimized and scalable implementation of gradient boosting.

- LightGBM - A highly efficient gradient boosting framework that uses a histogram-based approach.

- CatBoost - A gradient boosting library designed to be fast and to handle categorical features efficiently without extensive preprocessing.

Literature:

- Aurélien Géron, "Hands-On Machine Learning with Scikit-Learn, Keras, and TensorFlow: Concepts, Tools, and Techniques to Build Intelligent Systems," 2nd Edition - This practical guide covers various machine learning algorithms, including gradient boosting, with hands-on examples and code implementations.

- Jerome H. Friedman, "Greedy Function Approximation: A Gradient Boosting Machine" - This seminal paper introduces the concept of gradient boosting and provides the theoretical foundation for GBM. Friedman explains the algorithm and its mathematical underpinnings in detail.

Conclusions:

Gradient Boosting Machines (GBMs) represent a significant advancement in machine learning, leveraging the power of ensemble learning to build robust and accurate predictive models. Originating from the broader concept of boosting, GBMs were notably advanced by Jerome H. Friedman, who introduced the idea of using gradient descent to optimize model performance iteratively.

Over the years, GBMs have evolved through various enhancements, including stochastic elements and optimized implementations like XGBoost, LightGBM, and CatBoost. These developments have made GBMs a go-to choice for many regression and classification tasks, known for their high performance and flexibility.

Despite their computational cost and the need for careful hyperparameter tuning, GBMs handle complex datasets well and often deliver strong predictive accuracy. Their ongoing refinement and application across diverse fields highlight their continued relevance. As machine learning continues to evolve, GBMs remain a core technique that shows how combining many simple models can outperform any single one of them.
