AI Ethics and Responsible Data Science: Building Fair and Private Machine Learning Systems

AI ethics refers to the principles and practices that ensure artificial intelligence systems are fair, transparent, accountable, and respectful of user privacy. Artificial Intelligence and machine learning systems are becoming part of everyday life. They help detect fraud, recommend products, diagnose diseases, and automate many business decisions. However, as these systems become more powerful, an important question arises:

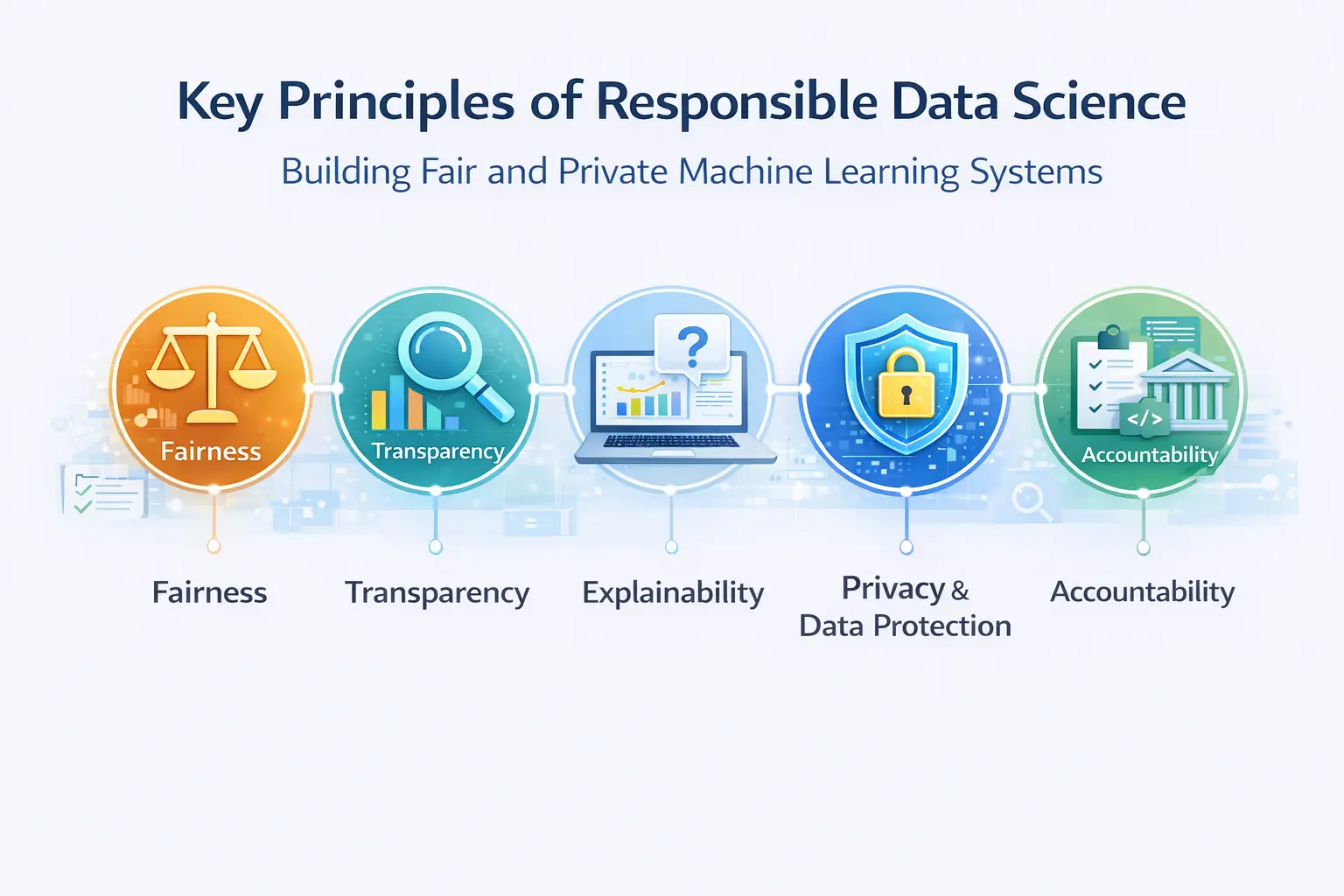

How can we ensure that AI systems are ethical, fair, and responsible? AI models can unintentionally introduce bias, discriminate against certain groups, or expose sensitive data if they are not designed carefully. Responsible data science focuses on building machine learning systems that are:

- fair

- transparent

- explainable

- privacy-preserving

- accountable

In this article, we explore the principles of AI ethics and responsible data science, and discuss tools that help data scientists build more trustworthy machine learning systems.

Why AI Ethics Matters

Machine learning models learn patterns from data. If the data used to train a model contains bias or imbalance, the model may reproduce or even amplify these biases. Examples of AI bias have appeared in several domains:

- hiring algorithms that disadvantage certain demographics,

- facial recognition systems that perform worse on underrepresented groups,

- loan approval systems that unintentionally discriminate.

These issues highlight the importance of ethical AI design. Responsible data science aims to identify these problems early and build systems that treat individuals and groups fairly.

Key Principles of Responsible Data Science

Several core principles guide ethical AI development.

Fairness

Fairness in machine learning means that models should not produce outcomes that systematically disadvantage certain groups. For example, a loan approval model should not reject applicants because of demographic characteristics such as gender or ethnicity. Evaluating fairness typically involves measuring how predictions differ across groups, for example by comparing the rate of positive predictions or the error rates for each group. Modern machine learning tools increasingly include fairness metrics to help detect potential bias. MLJAR AutoML, for instance, includes built-in fairness evaluation that helps data scientists identify whether model predictions behave differently across sensitive groups. By measuring fairness during model evaluation, developers can detect bias early and improve model design.
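To make "measuring how predictions differ across groups" concrete, here is a minimal sketch of one common fairness check, demographic parity, written in plain Python. The data, the group labels "A" and "B", and the function names are hypothetical and chosen only for illustration; this is not the implementation used by MLJAR AutoML.

```python
from collections import defaultdict


def selection_rates(predictions, groups):
    """Return the share of positive predictions (1) for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += int(pred == 1)
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity(predictions, groups):
    """Return (difference, ratio) between the highest and lowest selection rate."""
    rates = selection_rates(predictions, groups)
    highest, lowest = max(rates.values()), min(rates.values())
    difference = highest - lowest
    ratio = lowest / highest if highest > 0 else 1.0
    return difference, ratio


# Hypothetical loan decisions (1 = approved) for two demographic groups.
predictions = [1, 1, 1, 0, 1, 0, 0, 0, 1, 0]
groups = ["A"] * 5 + ["B"] * 5

rates = selection_rates(predictions, groups)
difference, ratio = demographic_parity(predictions, groups)
print(rates)
print(f"difference={difference:.2f}, ratio={ratio:.2f}")
```

In this toy data, group A is approved 80% of the time and group B 20% of the time, so the parity difference is 0.6 and the ratio is 0.25. A difference near 0 (or a ratio near 1) suggests similar treatment on this one metric; which threshold counts as acceptable depends on the application.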

Transparency

Transparency means that the behavior of AI systems should be understandable to developers and stakeholders. Black-box models can make decisions that are difficult to interpret, which makes it harder to detect errors or biases. Responsible machine learning systems should provide insight into:

- how models make predictions,

- which features influence decisions,

- how models behave on different datasets.

Visualization tools and explainability techniques play an important role in achieving transparency.
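One widely used way to see which features influence a model's decisions is permutation importance: shuffle one feature at a time and measure how much accuracy drops. The sketch below is a simplified, dependency-free illustration of the idea. The `toy_model` rule, the data, and all function names are assumptions made for this example; in practice a library implementation would normally be used.

```python
import random


def accuracy(model, rows, labels):
    """Share of rows for which the model's prediction matches the label."""
    hits = sum(model(row) == label for row, label in zip(rows, labels))
    return hits / len(rows)


def permutation_importance(model, rows, labels, n_repeats=20, seed=0):
    """Mean accuracy drop when each feature column is shuffled in turn."""
    rng = random.Random(seed)
    baseline = accuracy(model, rows, labels)
    importances = []
    for j in range(len(rows[0])):
        drops = []
        for _ in range(n_repeats):
            # Shuffle only column j, leaving the other features untouched.
            column = [row[j] for row in rows]
            rng.shuffle(column)
            shuffled = [row[:j] + [value] + row[j + 1:]
                        for row, value in zip(rows, column)]
            drops.append(baseline - accuracy(model, shuffled, labels))
        importances.append(sum(drops) / n_repeats)
    return importances


# Hypothetical "model": a fixed rule that looks only at feature 0.
def toy_model(row):
    return int(row[0] > 50)


# Feature 0 drives the label; feature 1 is noise the model ignores.
rows = [[20, 1], [30, 0], [40, 1], [60, 0], [70, 1], [80, 0]]
labels = [0, 0, 0, 1, 1, 1]

importances = permutation_importance(toy_model, rows, labels)
print(importances)
```

Because the toy rule never reads feature 1, shuffling it cannot change any prediction and its importance is exactly zero, while shuffling feature 0 lowers accuracy. A feature with unexpectedly high importance, such as a proxy for a sensitive attribute, is a signal worth investigating.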

Explainability

Explainability focuses on understanding why a model makes a specific prediction. This is especially important in sensitive domains such as healthcare, finance, or legal systems. For example, if a model rejects a loan application, the system should be able to explain which factors contributed to the decision. Explainable AI techniques help provide these insights and increase trust in machine learning systems.
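For a linear or logistic scoring model, a per-decision explanation can be read directly from the model: each feature contributes its weight times its value. The following sketch illustrates this for the loan example. The weights, the bias, and the feature names are made up for illustration and do not come from any real model; more complex models typically need dedicated attribution techniques instead.

```python
import math

# Hypothetical coefficients of a logistic regression loan model.
WEIGHTS = {"income_k": 0.04, "debt_ratio": -3.0, "late_payments": -0.8}
BIAS = -0.5


def explain(applicant):
    """Return the approval probability and feature contributions, largest first."""
    contributions = {name: WEIGHTS[name] * applicant[name] for name in WEIGHTS}
    score = BIAS + sum(contributions.values())
    probability = 1 / (1 + math.exp(-score))
    # Rank by absolute size so the most influential factors come first.
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    return probability, ranked


# Hypothetical applicant: income in thousands, debt-to-income ratio, late payments.
applicant = {"income_k": 40, "debt_ratio": 0.6, "late_payments": 1}

probability, ranked = explain(applicant)
print(f"approval probability: {probability:.2f}")
for name, contribution in ranked:
    print(f"{name}: {contribution:+.2f}")
```

For this applicant the debt ratio is the largest negative factor, followed by a positive contribution from income and a smaller negative one from late payments. A ranked list like this is the kind of information a rejected applicant or an auditor could be shown.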

Privacy and Data Protection

Many AI systems rely on large datasets that may contain sensitive information. Protecting user privacy is therefore a critical component of responsible data science. Organizations must ensure that:

- personal data is protected,

- data is not exposed unintentionally,

- sensitive information is handled securely.
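One practical way to reduce exposure of personal data is to pseudonymize direct identifiers before a dataset is used for modeling. The sketch below uses a keyed hash (HMAC-SHA256) from the Python standard library. The field names, the example record, and the placeholder key are assumptions; note that pseudonymization alone does not make data anonymous, and the key would need to be stored securely outside the code.

```python
import hashlib
import hmac


def pseudonymize(value: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed SHA-256 digest (stable, not reversible without the key)."""
    digest = hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()


def protect_record(record, secret_key, id_fields=("email",), drop_fields=("full_name",)):
    """Drop fields that are not needed and pseudonymize the remaining identifiers."""
    protected = {}
    for field, value in record.items():
        if field in drop_fields:
            continue  # data minimization: do not keep what the model does not need
        if field in id_fields:
            protected[field] = pseudonymize(str(value), secret_key)
        else:
            protected[field] = value
    return protected


# Placeholder key for illustration only; load a real key from a secrets store.
key = b"replace-with-a-secret-key"
record = {"full_name": "Ana Example", "email": "ana@example.com", "income": 52000}

safe = protect_record(record, key)
print(safe)
```

The same email always maps to the same token, so records can still be joined across tables, while the raw identifier never enters the training data.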

One of the biggest concerns with modern AI tools is that they often rely on cloud-based services where data may be transmitted to external servers. For some organizations, this creates privacy and compliance challenges.

Building Privacy-First Machine Learning Workflows

One way to address these concerns is by using tools that allow machine learning development to happen locally. MLJAR Studio is a desktop environment designed for data science and machine learning that prioritizes privacy and control over data. Unlike many cloud-based platforms, MLJAR Studio can run entirely on a local machine. This means:

- datasets remain on your computer,

- sensitive information is not transmitted externally,

- experiments can be performed offline.

The desktop environment also allows users to install and run private large language models (LLMs) locally. This is particularly valuable for organizations that want to use AI assistants without sending data to external providers. Because the entire environment can operate offline, MLJAR Studio enables private and secure machine learning workflows.

Detecting Bias in Machine Learning Models

Detecting bias is an important step in responsible machine learning. Bias can enter a system from several sources:

- imbalanced datasets,

- historical biases reflected in data,

- feature selection issues,

- model optimization choices.

To reduce bias, data scientists often perform additional evaluation beyond standard accuracy metrics. This may include analyzing model predictions across different groups or measuring fairness metrics.
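As an illustration of evaluation beyond overall accuracy, the sketch below compares the true positive rate and false positive rate for each group, which is the comparison behind the "equalized odds" fairness criterion. The labels, predictions, and group names are hypothetical.

```python
from collections import Counter


def group_error_rates(y_true, y_pred, groups):
    """Return the true positive rate and false positive rate for each group."""
    counts = Counter()
    for truth, pred, group in zip(y_true, y_pred, groups):
        counts[(group, truth, pred)] += 1

    rates = {}
    for group in sorted(set(groups)):
        tp = counts[(group, 1, 1)]
        fn = counts[(group, 1, 0)]
        fp = counts[(group, 0, 1)]
        tn = counts[(group, 0, 0)]
        rates[group] = {
            # None marks a rate that is undefined because the group has no such cases.
            "tpr": tp / (tp + fn) if tp + fn else None,
            "fpr": fp / (fp + tn) if fp + tn else None,
        }
    return rates


# Hypothetical ground truth and predictions for two groups of five people each.
y_true = [1, 1, 1, 0, 0, 1, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 1, 0, 0, 0, 0]
groups = ["A"] * 5 + ["B"] * 5

rates = group_error_rates(y_true, y_pred, groups)
for group, values in rates.items():
    print(group, values)
```

Overall accuracy here is 70%, which hides the fact that every qualified person in group A is approved while only one in three qualified people in group B is. Large gaps of this kind are exactly what a single aggregate metric fails to reveal.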

Tools like MLJAR AutoML help automate model training and evaluation while also supporting fairness analysis.

Project page: https://github.com/mljar/mljar-supervised

Automated evaluation allows data scientists to quickly detect potential issues and improve models before deployment.

Responsible Deployment of Machine Learning Models

Responsible AI development does not end after training a model. Deployment introduces new challenges, including:

- monitoring model behavior over time,

- detecting drift in data distributions,

- ensuring predictions remain fair and reliable.

Responsible data science practices include continuous monitoring of model performance and fairness metrics. By regularly evaluating models in production, organizations can ensure that systems remain reliable and ethical.
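Drift in a feature's distribution can be monitored with a simple statistic such as the Population Stability Index (PSI), which compares binned shares between a reference sample and current production data. The sketch below is a minimal version under stated assumptions: equal-width bins taken from the reference range, a small `eps` to avoid division by zero, and synthetic data. The function name and parameters are illustrative.

```python
import math


def population_stability_index(reference, current, n_bins=10, eps=1e-6):
    """PSI between two samples, using equal-width bins from the reference range."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / n_bins or 1.0  # fall back to 1.0 if all reference values are equal

    def bin_shares(values):
        counts = [0] * n_bins
        for value in values:
            index = int((value - lo) / width)
            index = min(max(index, 0), n_bins - 1)  # clamp out-of-range values to edge bins
            counts[index] += 1
        # Floor empty bins at eps so the logarithm below is defined.
        return [max(count / len(values), eps) for count in counts]

    ref_shares = bin_shares(reference)
    cur_shares = bin_shares(current)
    return sum((cur - ref) * math.log(cur / ref)
               for ref, cur in zip(ref_shares, cur_shares))


# Synthetic example: production values are shifted upward relative to training data.
reference = list(range(100))
shifted = [value + 30 for value in reference]

psi_same = population_stability_index(reference, reference)
psi_shifted = population_stability_index(reference, shifted)
print(f"no drift: {psi_same:.3f}, shifted: {psi_shifted:.3f}")
```

A PSI of zero means the binned distributions are identical. As a common rule of thumb, values above roughly 0.25 are often treated as a significant shift, but the cut-off is a convention, not a guarantee. The same check can be run per group so that drift affecting only one population is not averaged away.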

Responsible AI in Practice

Building ethical AI systems requires both technical tools and responsible design decisions. Best practices include:

- auditing training datasets,

- measuring fairness metrics,

- using explainability techniques,

- protecting sensitive data,

- monitoring models after deployment.

These practices help ensure that machine learning systems serve society in a fair and responsible way.

AI Regulations and Responsible AI

As artificial intelligence systems become more common in finance, healthcare, hiring, and public services, governments are introducing regulations to ensure that AI is developed and used responsibly.

One of the most important initiatives is the European Union AI Act, which classifies AI systems based on their level of risk. High-risk systems, such as those used in credit scoring, recruitment, or healthcare, must meet strict requirements related to transparency, fairness, and accountability. Other organizations, including the OECD and international AI governance bodies, are also developing frameworks that encourage ethical AI development. These frameworks emphasize responsible data use, bias detection, model explainability, and protection of user privacy.

For data scientists and machine learning engineers, this means that building accurate models is no longer the only goal. Increasingly, teams must also demonstrate that their systems are fair, transparent, and compliant with responsible AI guidelines. Tools that support fairness evaluation, explainability, and privacy-preserving workflows, such as MLJAR AutoML and MLJAR Studio, can help teams develop machine learning systems that align with modern AI regulations while keeping sensitive data secure.

Tools for Responsible Machine Learning

Several tools help data scientists build ethical AI systems:

- MLJAR Studio: private machine learning environment with AI assistant,

- MLJAR AutoML: automated training with fairness evaluation,

- SuperTree: interpretable decision tree visualization tools,

- MLJAR Mercury: sharing models and results through web applications.

Checklist for Responsible Data Science

When building machine learning systems, data scientists should work through the following checklist:

- audit training datasets for bias,

- evaluate fairness metrics across sensitive groups,

- ensure model predictions are explainable,

- protect sensitive data and maintain privacy,

- monitor models after deployment.

Common Questions About Responsible AI

What is AI ethics?

AI ethics refers to principles and practices that ensure artificial intelligence systems are designed and used responsibly. This includes fairness, transparency, privacy protection, and accountability.

What is fairness in machine learning?

Fairness refers to ensuring that machine learning models do not systematically disadvantage certain groups of people. Fairness metrics help measure whether predictions differ across groups.

Why is privacy important in data science?

Data science often involves sensitive information such as personal data or financial records. Protecting this data is essential to maintain trust and comply with regulations.

Can machine learning workflows run offline?

Yes. Tools like MLJAR Studio allow machine learning workflows to run entirely on a local computer without sending data to external services.

Summary

Artificial Intelligence has enormous potential to improve decision-making and automate complex tasks. However, with this power comes responsibility. Responsible data science requires careful attention to fairness, transparency, explainability, and privacy. By combining ethical principles with modern tools such as MLJAR Studio and MLJAR AutoML, developers can build machine learning systems that are both powerful and trustworthy. The goal of responsible AI is not only to build better models, but also to ensure that technology benefits everyone.
