Sep 27 2024 · Maciej Małachowski

TabNet vs XGBoost

Compare TabNet vs XGBoost on tabular machine learning tasks, with practical benchmarks, tuning insights, and implementation guidance.

Learn 4 effective ways to visualize XGBoost trees in Python, from built-in plotting to detailed tree inspection workflows.

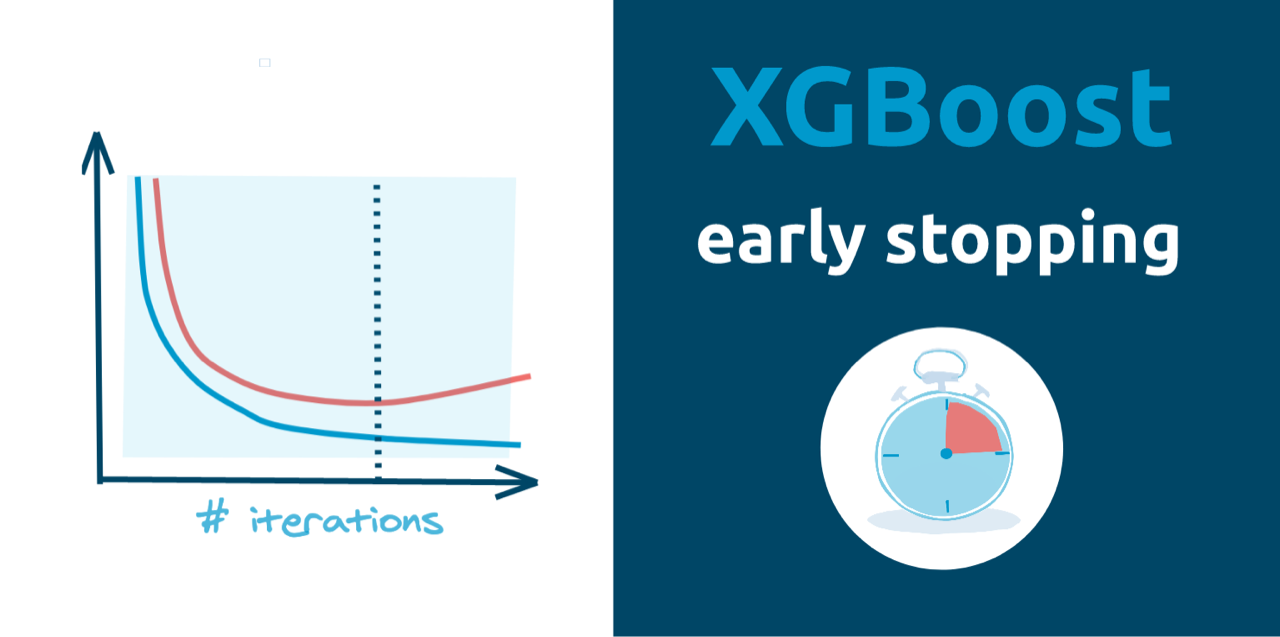

Learn how to implement XGBoost early stopping in Python to find the optimal number of trees during training and avoid both underfitting and overfitting.

Learn how to save and load XGBoost models in Python safely, with practical methods for reproducible ML workflows.

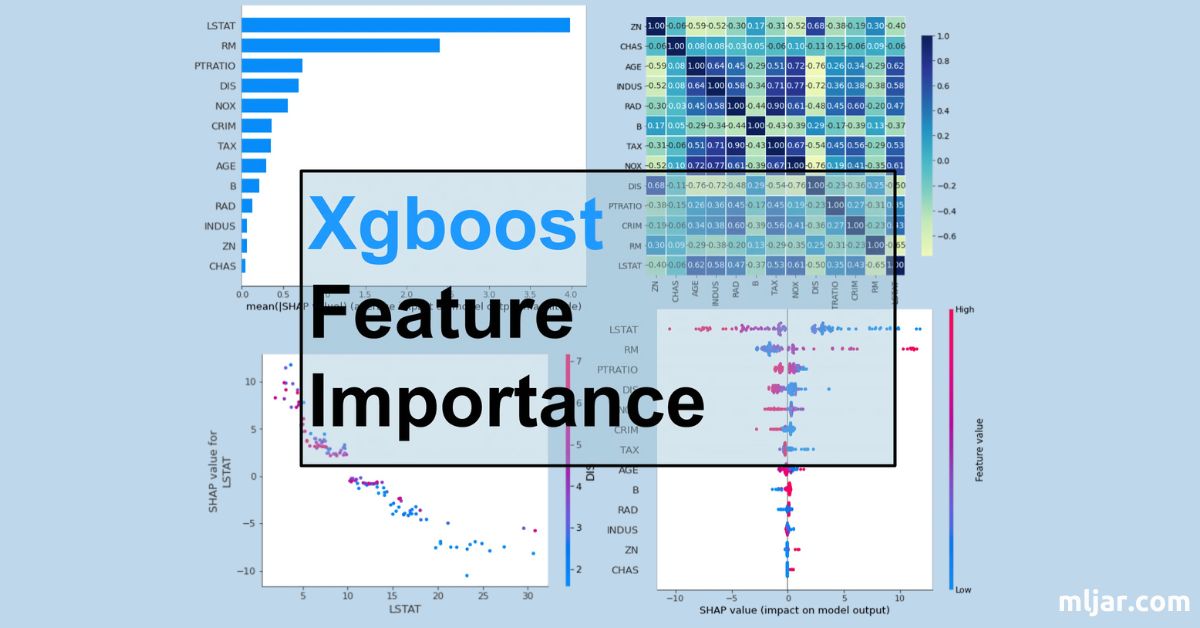

Learn how to compute and visualize feature importance with XGBoost in Python using three methods: built-in feature importance, permutation importance, and SHAP values.